Multi-Stylization of Video Games in Real-Time guided by G-buffer Information

A. Saint-Denis,

K. Vanhoey,

T. Deliot

Presented by A. Saint-Denis at

High Performance Graphics 2019, July 1-3 2019, Strasbourg, France

Abstract:

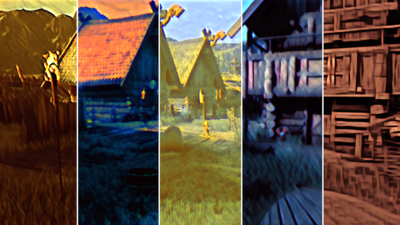

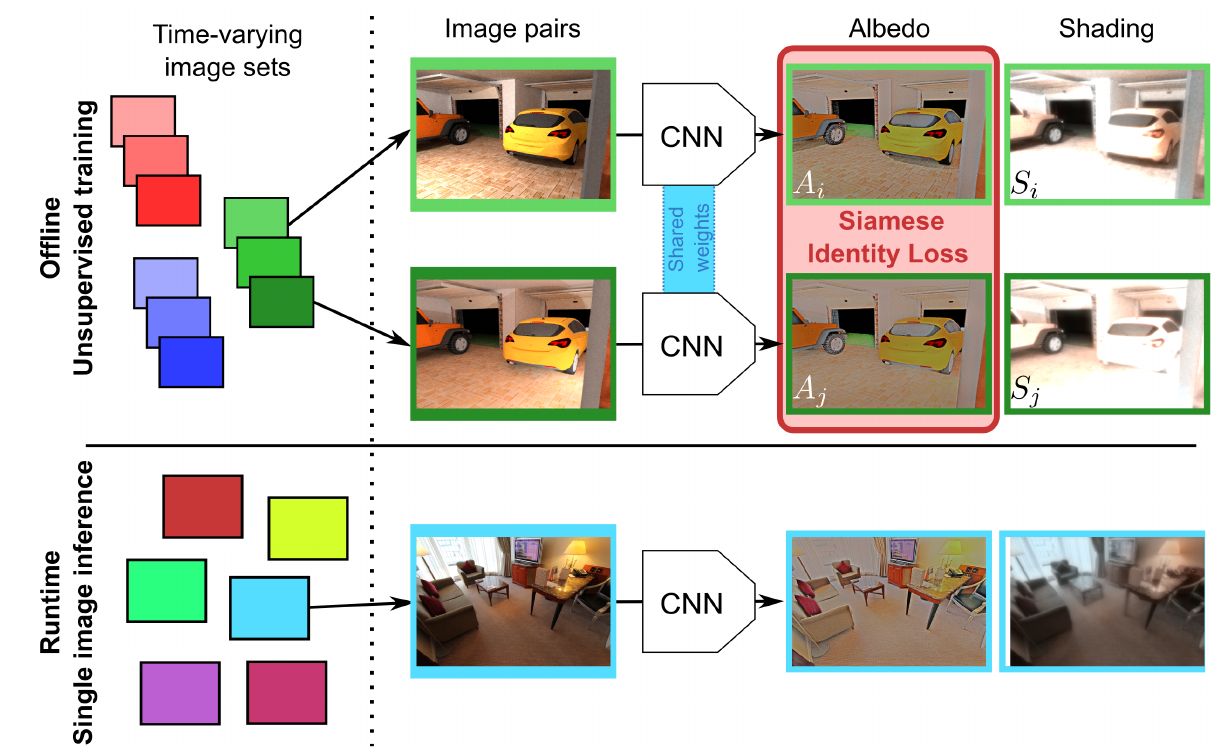

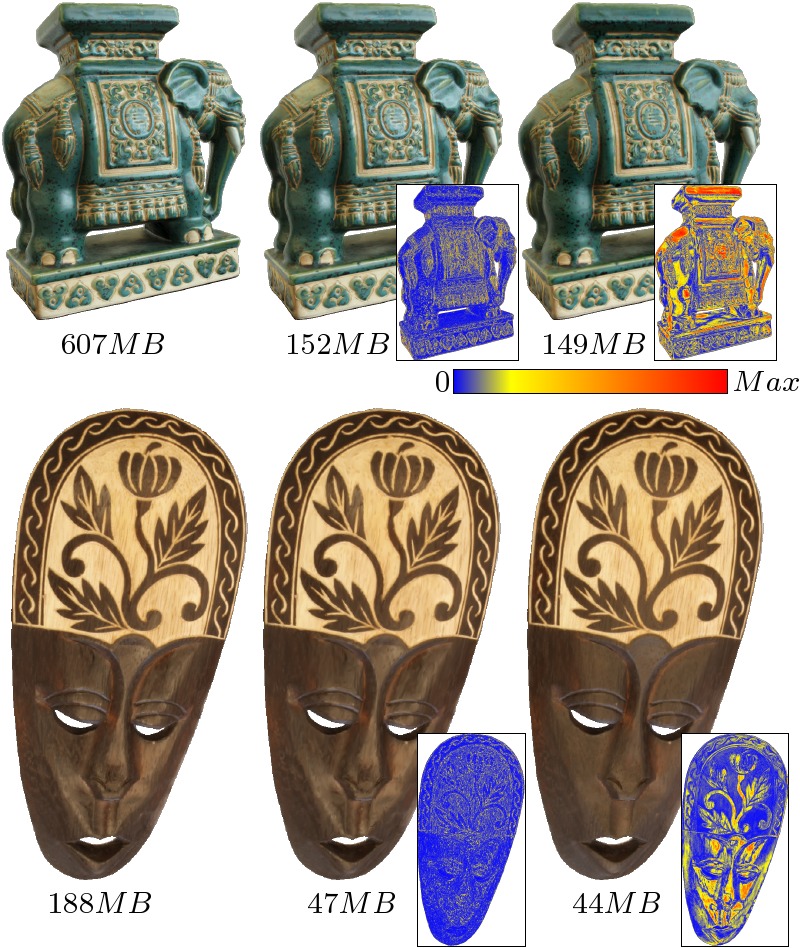

We investigate how to take advantage of modern neural style transfer techniques to modify the style of video games at runtime.

Recent style transfer neural networks are pre-trained and can apply an arbitrary style quickly at runtime.

However, a single style applies globally, over the full image, whereas we would like to provide finer authoring tools to the user.

In this work, we allow the user to assign styles (by means of a style image) to various physical quantities found in the G-buffer of a deferred rendering pipeline, like depth, normals, or object ID.

Our algorithm then interpolates those styles smoothly according to the scene to be rendered: e.g., a different style arises for different objects, depths, or orientations.
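To make the interpolation idea concrete, here is a minimal sketch of depth-guided style blending. It is not the authors' implementation: it assumes each style is summarized by a small parameter vector, that the user has assigned one style to a near depth and another to a far depth, and that the per-pixel blend is linear. The function names `depth_weights` and `blend_style_params`, the `near`/`far` bounds, and the toy values are all hypothetical.

```python
def depth_weights(depth, near, far):
    """Map each depth value to a blend weight in [0, 1].

    0 selects the style assigned to `near`, 1 the style assigned to `far`.
    The linear ramp and the clamping are assumptions of this sketch.
    """
    if far <= near:
        raise ValueError("far must be greater than near")
    span = far - near
    return [[min(1.0, max(0.0, (d - near) / span)) for d in row] for row in depth]


def blend_style_params(weights, style_near, style_far):
    """Linearly interpolate two style parameter vectors for every pixel."""
    return [
        [[(1.0 - w) * a + w * b for a, b in zip(style_near, style_far)] for w in row]
        for row in weights
    ]


# Hypothetical 2x2 depth buffer and two 3-component style vectors.
depth = [[1.0, 5.5], [10.0, 20.0]]
weights = depth_weights(depth, near=1.0, far=10.0)
blended = blend_style_params(weights, [1.0, 0.0, 0.0], [0.0, 0.0, 1.0])
```

In this toy setup, pixels at the near bound receive the first style unchanged, pixels at or beyond the far bound receive the second, and everything in between gets a smooth mix. The same weighting scheme could in principle be driven by other G-buffer channels such as normals or object ID, with a weight per assigned style instead of a single scalar.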